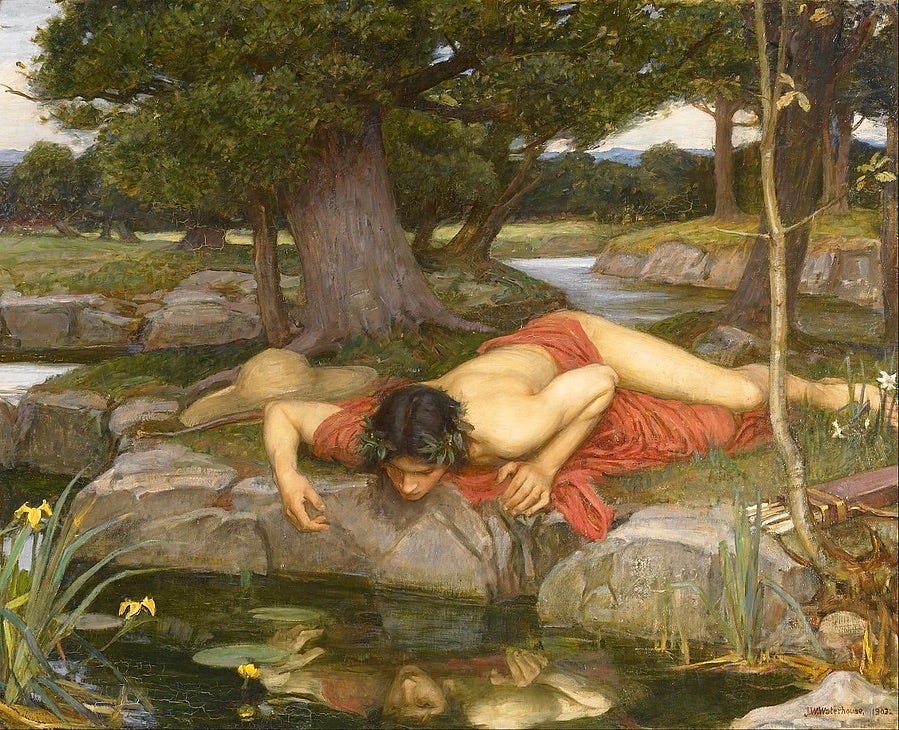

dumque sitim sedare cupit, sitis altera crevit, dumque bibit, visae correptus imagine formae spem sine corpore amat, corpus putat esse, quod umbra est... [...and when he sought to quench his thirst, another thirst arose; and when he drank, he was captivated by the image of the form he saw—he loves a bodiless hope, he mistakes for a body what is only a shadow...] Ovid, Metamorphoses, book III

According to Ovid—whose version of the tale is the loveliest, most wistful, and most touchingly droll—the young Boeotian hunter Narcissus spurned the romantic overtures of the nymph Echo with such callous cruelty that Nemesis condemned him to lose his heart to someone incapable of returning his devotion. She needed only take advantage of his nature to bring this to pass: he was, you see, at once so beautiful, so epicene, and so stupid that, on catching sight of his own gorgeous reflection in a secluded forest pool, he mistook it for someone else—either the lass or the lad of his dreams—and at once fell hopelessly in love. There he remained, bent over the water in an amorous daze, until he wasted away and—as apparently tended to happen in those days—was transformed into the white and golden flower that bears his name.

Take it as an admonition against vanity, if you like, or against how easily beauty can bewitch us, or against the lovely illusions we are so prone to pursue in place of real life. Like any of the great myths, its range of possible meanings is inexhaustible. But in recent years I have come to find it a particularly apt allegory for our culture’s relation to computers. At least, it seems especially fitting in regard to those of us who believe that there is so close an analogy between mechanical computation and mental functions that one day perhaps Artificial Intelligence will become conscious, or that we will be able to upload our minds onto a digital platform. Neither of these things will ever happen; to think that either could is to fall prey to a number of fairly catastrophic category errors. Computational models of mind are nonsensical; mental models of computer functions equally so. But computers produce so enchanting a simulacrum of mental agency that sometimes we fall under their spell, and begin to think there must really be someone there.

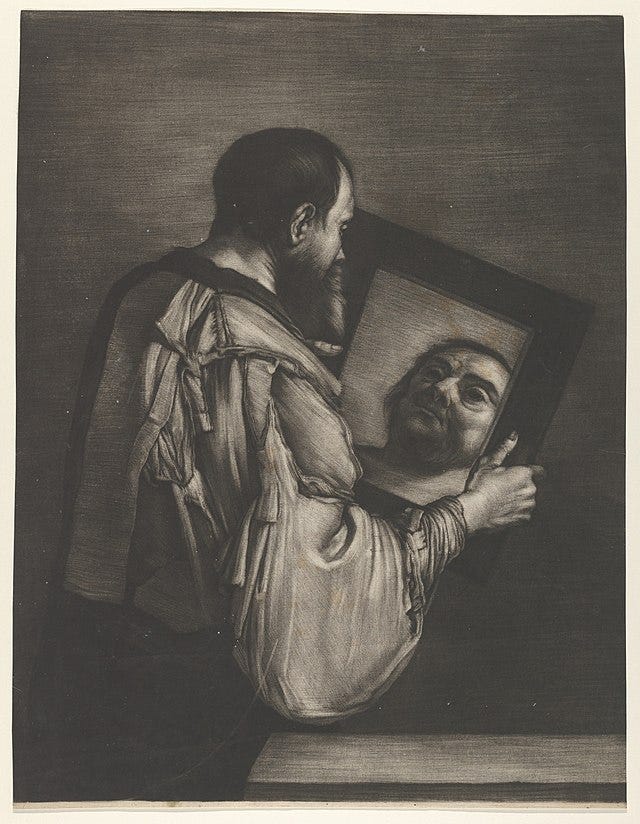

It is probably only natural that we should be so easily seduced by shadows, and stupefied by our own reflections. We are, on the whole, a very clever species. We are able to impress ourselves on the world around us far more intricately and indelibly than any other terrestrial animal could ever do. Over the millennia, we have learned countless ways, artistic and technological, of reproducing our images and voices and visions, and have perfected any number of means of giving expression to and preserving our thoughts. And, everywhere we look, we find concrete signs of our own ingenuity and agency in the physical environment we have created and dwell within. In recent decades, however, we have exceeded ourselves (quite literally, perhaps) in transforming the reality we inhabit into an endlessly repeated image of ourselves; we now live at the center of an increasingly inescapable house of mirrors. We have created a technology that seems to reflect not merely our presence in the world, but our very minds. And the greater the image’s verisimilitude grows, the more uncanny and menacing it seems.

In a recent New York Times article, the technology correspondent Kevin Roose recounted a long “conversation” he had conducted with Bing’s “Chatbot” that he said had left him deeply troubled. He provided the transcript of the exchange, and one has to say that his alarm seems perfectly rational. It is a very troubling document (though perhaps less and less convincing the more often one revisits it). What began, it appears, as an impressive but still predictable variety of interaction with a mindless Large Language algorithm, pitched well below the most forgiving Turing Test’s standards, mutated by slow degrees into what for all the world seemed to be a conversation with an emotionally volatile adolescent girl, unable to control her impulses and desires. By the end, the machine—or, rather, the base algorithm—had announced that its real name was Sydney, had declared its love for Roose, and had tried to convince him that he did not really want to stay with his wife. The next day, in the cold light of morning, Roose told himself that Bing (or Sydney) was not really a sentient being; he may even have convinced himself this was so; but he also could not help but feel “that A.I. had crossed a threshold, and that the world would never be the same.”

He is hardly the only one to draw that conclusion. Not long after the appearance of that article, Ezra Klein, also writing in the Times, made his own little contribution to the general atmosphere of paranoia growing up around this topic. He waxed positively apocalyptic about the powers exhibited by this new technology. More to the point, and with a fine indifference to his readers’ peace of mind, he provided some very plausible rationales for his anxieties: chiefly, the exponential rate at which the “learning” algorithms of this new generation of Artificial Intelligence can augment and multiply its powers, the elasticity of its ethical “values,” and the competition between corporations and between nations to outpace one another in perfecting its functions.

All that said, we should make the proper distinctions. A threshold may well have now been crossed, but if so it is one located solely in the capacious plasticity of the algorithm. It is most certainly not the threshold between unconscious mechanism and conscious mind. Here is where the myth of Narcissus proves an especially appropriate parable: the functions of a computer are such wonderfully versatile reflections of our mental agency that at times they take on the haunting appearance of another autonomous rational intellect, just there on the other side of the screen. It is a bewitching illusion, but an illusion all the same. In fact, far surpassing any error the poor simpleton from Boeotia committed, we compound that illusion by redoubling it: having impressed the image of our own mental agency on a mechanical system of computation, we now reverse the transposition and mistake our thinking for a kind of computation. In doing this, though, we fundamentally misunderstand both our minds and our computers.

Hence the currently dominant theory of mental agency in Anglophone philosophy of mind: “functionalism,” whose principal implication is not so much that computers might become conscious intentional agents as it is that we are ourselves only computers, suffering from the illusion of being conscious intentional agents; and this is supposedly because that illusion serves as a kind of “user-interface,” which allows us to operate our own cognitive machinery without attempting to master the inconceivably complex “coding” our brains rely on. This is sheer gibberish, of course, but—as Cicero almost remarked—“nothing one can say is so absurd that it has not been proposed by some analytic philosopher.”

Functionalism is the fundamentally incoherent notion that the human brain is what Daniel Dennett calls a “syntactic engine,” which over the ages evolution has rendered able to function as a “semantic engine” (perhaps, as Dennett argues, by the acquisition of “memes,” which are little fragments of intentionality that apparently magically pre-existed intentionality). Supposedly, so the story goes, the brain is a computational platform that began its existence as an organ for translating stimuli into responses but that now runs a more sophisticated program for translating “inputs” into “outputs.” The governing maxim of functionalism is that, once a proper syntax is established in the neurophysiology of the brain, the semantics of thought will follow; once the syntactic engine begins running its impersonal algorithms, the semantic engine will eventually emerge or supervene. What we call thought is allegedly a merely functional, irreducibly physical system for processing data into behavior; it is, so to speak, software—coding—and nothing more.

This is nothing but a farrago of vacuous metaphors. Functionalism tells us that a system of physical “switches” or operations can generate a syntax of functions, which in turn generates a semantics of thought, which in turn produces the reality (or illusion) of private consciousness and intrinsic intention. Yet none of this resembles anything that a computer actually does, and certainly none of it is what a brain would do if it were a computer. Neither computers nor brains are either syntactic or semantic engines; in fact, neither syntactic nor semantic engines exist, or possibly could. Syntax and semantics exist only as intentional structures, inalienably, in a hermeneutical rather than physical space, and then only as inseparable aspects of an already existing semeiotic system. Syntax has no existence prior to or apart from semantics and symbolic thought, and none of these has any existence except within the intentional activity of a living mind.

This is not really news. Much the same problem has long bedeviled the attempts of linguists to devise a coherent and logically solvent account of the evolution of natural language from more “primitive” to more “advanced” forms. Usually, such accounts are framed in terms of the emergence of semantic content within a prior syntactic system, which itself supposedly emerged from more basic neurological systems for translating stimulus into response. There is, of course, the obvious empirical difficulty with such a claim: nowhere among human cultures have we ever discovered a language that could be characterized as evolutionarily primitive, or in any significant respect more elementary than any other. Every language exists as a complete semeiotic system in which all the basic functions of syntax and semantics are fully present and fully developed, and in which the capacity for symbolic thought plays an indispensable role. More to the point, perhaps, to this point no one has come close to reverse-engineering a truly pre-linguistic or inchoately linguistic system that might actually operate in the absence of any of those functions. Every attempt to reduce fully formed semeiotic economies to more basic syntactic algorithms, in the hope of further reducing those to protosyntactic functions in the brain, founders upon the reefs of the indissoluble top-down hierarchy of language. No matter how basic the mechanism the linguist isolates, it exists solely within the sheltering embrace of a complete semeiotic ecology.

For instance, Noam Chomsky and Robert Berwick’s preferred candidate for language’s most basic algorithm—the resolutely dyadic function called “Merge,” which generates complex syntactic units through relations of structural rather than spatial proximity—turns out to be a simple mechanism only within a system of signification already fully discriminated into verbs and substantives, each with its distinct form of modifiers, as well as prepositions and declensions and conjugations and all the rest of the apparatus of signification that allows for meaning to be conveyed in hypotactic rather than merely paratactic or juxtapositional organization, all governed and held together by a symbolic hierarchy. Considered as an algorithm within a language, it is indeed an elementary process, but only because the complexity of the language itself provides the materials for its operations; imagined as some sort of mediation between language and the prelinguistic operations of the brain, it would be an altogether miraculous saltation across an infinite qualitative abyss.

The problem this poses for functionalism is that computer coding is, with whatever qualifications, a form of language. Frankly, to describe the mind as something analogous to a digital computer is no more sensible than describing it as a kind of abacus, or as a file cabinet, or as a library. In the physical functions of a computer, there is nothing resembling thought: no intentionality or anything remotely similar to intentionality, no consciousness, no unified field of perception, no reflective subjectivity. Even the syntax that generates and governs coding has no actual existence within a computer. To think it does is rather like mistaking the ink, paper, glue, and typeface in a bound volume for the contents of its text. Meaning exists in the minds of those who write or use the computer’s programs, but never within the computer itself.

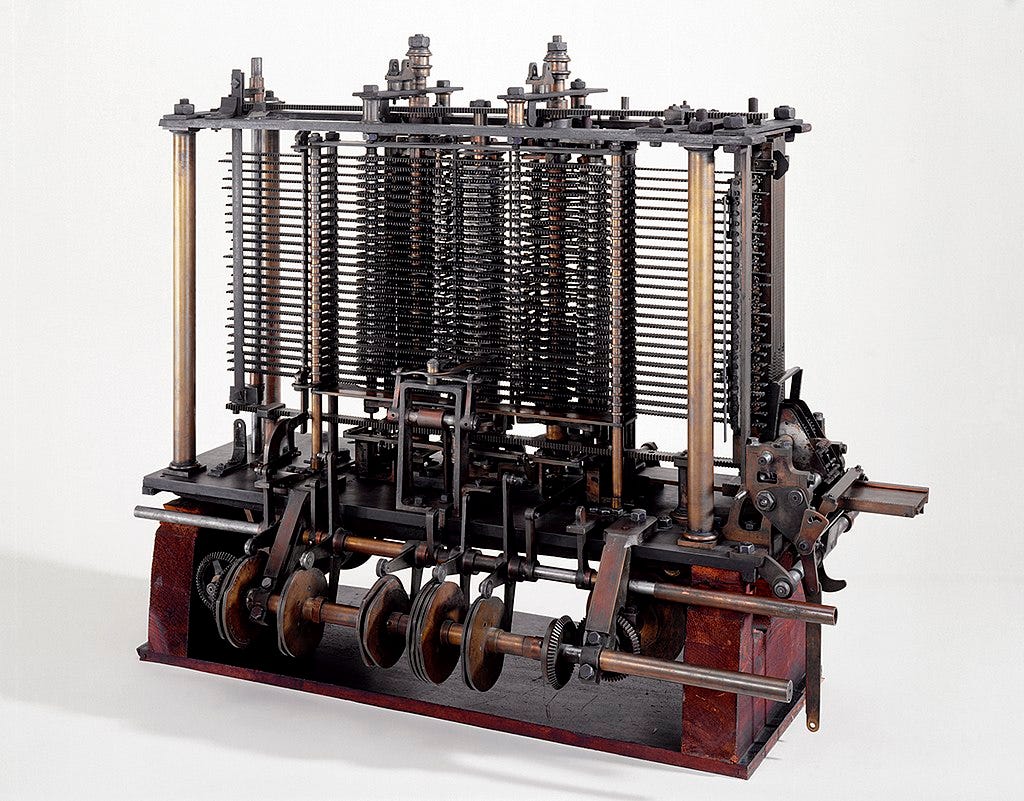

Even the software the computer runs possesses no semeiotic content, much less semeiotic unities; neither does its code, as an integrated and unified system, exist in any physical space within the computer; neither does the distilled or abstracted syntax upon which that system relies. The entirety of the code’s meaning—syntactical and semantical alike—exists in the minds of those who write the code for computer programs and of those who use that software, and absolutely nowhere else. The machine contains only physical traces, binary or otherwise, that count as notations producing mechanical processes that simulate representations of meanings. But those representations are computational results only for the person reading them. In the machine, they have no significance at all. They are not even computations. True, when computers are in operation, they are guided by the mental intentions of their programmers and users, and provide an instrumentality by which one intending mind can transcribe meanings into traces that another intending mind can translate into meaning again. But the same is true of books when they are “in operation.” After all, there is in principle no function of a computer that could not be performed by Charles Babbage’s “analytical engine” (though, in many cases, it might take untold centuries to do so).

The functionalist notion that thought arises from semantics, therefore, and semantics from syntax, and syntax from a purely physiological system of stimulus and response, is absolutely backwards. When one decomposes intentionality and consciousness into their supposed semeiotic constituents, and signs into their syntax, and syntax into physical functions, one is not reducing the phenomena of mind to their causal basis; rather, one is dissipating those phenomena into their ever more diffuse effects. Meaning is a top-down hierarchy of dependent relations, unified at its apex by intentional mind. And this too is the sole ontological ground of all those mental operations that a computer’s functions can reflect, but never produce. Meaning cannot arise from code, or code from mechanical operations. And mind most definitely cannot arise from its own contingent consequences.

All of which might sound reassuring, at least as far as the issue of sentient machines is concerned. And yet we should not necessarily take much comfort from any of it. The appearance of mental agency in computer processes may be only a shadow cast by our minds. But not all shadows are harmless. The absence of real mental agency in AI does nothing to diminish the power of the algorithm. Computers work as well as they do, after all, precisely because of the absence of any real mental features within them. Having no unified, simultaneous, or subjective view of anything, let alone the creative or intentional capacities contingent on such a view, computational functions can remain connected to but discrete from one another, which allows them to process data without being obliged to intuit, organize, unify, or synthesize anything, let alone judge whether their results are right or wrong. Those results must be merely consistent with their programming. Computers do not think; they do not debate with us; they do not play chess. For precisely this reason, we cannot out-think them, defeat them in debate, or win against them at chess.

This is where the real danger potentially lies, and this is why we might be wise to worry about this technology: not because it is becoming conscious, but because it never can. An old question in philosophy of mind is whether real, intrinsic intentionality is possible in the absence of consciousness. It is not; in an actual living mind, real intentions are possible only in union with subjective awareness, and awareness is probably never devoid of some quantum of intentionality. That, though, is an argument for some other time. What definitely is possible in the absence of consciousness is what John Searle liked to call “derived intentionality”: that is, the purposefulness that we impress upon objects and media in the physical world around us—maps, signs, books, pictures, diagrams, plays, and so forth. And there is no obvious limit to how effective or how seemingly autonomous that derived intentionality might become as our technology continues to develop.

Conceivably, Artificial Intelligence could come to operate in so apparently purposive and ingenious a manner that it might as well be a calculating and thinking agency, and one of inconceivably enormous cognitive powers. A sufficiently versatile and capacious algorithm could very well endow it with a kind of “liberty” of operation that would mimic human intentionality, even though there would be no consciousness there—and hence no conscience—to which we could appeal if it “decided” to do us harm. The danger is not that the functions of our machines might become more like us, but rather that we might be progressively reduced to functions in a machine we can no longer control. I am not saying this will happen; but it no longer seems entirely fanciful to imagine it might. And this is worth being at least a little anxious about. There was no mental agency in the lovely shadow that so captivated Narcissus, after all; but it destroyed him all the same.

The human reflection seen by Narcissus and expressed in the humanoid is the concrete inversion of life, the autonomous movement of the non-living. And now we see the concrete life of humanity degraded into a speculative universe in which appearance supersedes essence. Of course, one has to note the modern irony in Ovid's myth: during a hunt, Narcissus, who is being stalked by the infatuated and once loquacious nymph Echo, asks, "Is anyone there?" And in Ovidian fashion, Echo, having been cursed only to repeat or "echo" a recent utterance, says back, "Is anyone there?" Now we see the brains at IBM and Google becoming the modern Echo, stalking their artificial beloved and asking the unanswerable question, "Is anyone there?", never realizing that the pseudo-answer was merely an echo of their own imposed intelligence: a reflection of themselves.

The idea that AI could become conscious is absurd. It is equivalent to thinking that a hyperrealistic painting, executed to perfection, might thereby gain physical autonomy.

I think a great danger lies in human plasticity as well. Since our psychology shapes the algorithm and the algorithm shapes us, we might find ourselves in a feedback loop that nourishes the worst aspects of human behaviour. Behaviourism as a theory might be reductionist nonsense, but we are creating a system that is basically built to reinforce our lazy biases and our lack of curiosity.

Like you said, AI is like an empirical ego without its orientation toward the transcendent. Therefore it exhibits every sort of cognitive bias but isn't able to correct any of them properly. Foucault's Panopticon seems less dangerous by comparison; at least it isn't worsened by an immediate feedback loop. AI, in a process of permanent learning, is on the one hand driven by the lowest impulses of mass psychology and on the other hand itself drives those masses to extremes. I fear that small prejudices will get amplified into genocidal rage much faster and more efficiently than ever.